Visualizing neural population activity

View the time-varying activity of three neurons in population space

From neuron to population

Traditionally, to assess how a single neuron responds to a stimulus, we plot its peri-stimulus time histogram (PSTH), which is its trial-averaged firing rate over time. However, neurons don't act in isolation, so it's important to think about how these PSTHs look across a population of neurons. One way to view this so-called "population activity" is to plot the firing rate of each neuron on its own axis, so that the population's activity traces out a trajectory over time. (If you have more than three neurons, you could first apply a dimensionality reduction technique like PCA and plot the activity along the first three principal components.)
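To make the PCA step concrete, here is a minimal sketch using only NumPy. The function name `project_onto_top_pcs` and the simulated 10-neuron population are hypothetical and purely illustrative; with real data you would substitute a (timepoints × neurons) matrix of PSTHs.

```python
import numpy as np

def project_onto_top_pcs(rates, n_components=3):
    """Project population activity onto its top principal components.

    rates: array of shape (n_timepoints, n_neurons), one PSTH per column.
    Returns an array of shape (n_timepoints, n_components).
    """
    # PCA operates on mean-centered data (each neuron's mean rate removed).
    centered = rates - rates.mean(axis=0, keepdims=True)
    # Rows of vt are the principal axes, ordered by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T

# Hypothetical population: 10 neurons whose rates mix three latent time courses.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 200)
latents = np.stack([np.sin(2 * np.pi * t), np.cos(2 * np.pi * t), t], axis=1)
mixing = rng.normal(size=(3, 10))
rates = 20.0 + latents @ mixing            # shape (200, 10)

trajectory = project_onto_top_pcs(rates)   # shape (200, 3)
print(trajectory.shape)
```

Each row of `trajectory` is one point in the reduced three-dimensional population space, so plotting the rows in order as a 3D line gives the same kind of picture as plotting three neurons directly.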

Visualizing population activity can reveal interesting geometries that aren't obvious just from the PSTHs of individual neurons. You can play around with the parameters of three neurons' PSTHs below and see how the resulting population picture changes. Click the buttons for presets to make the activity look like a snowflake, a spider, a gramophone, or a head massager. Click and drag the 3D plot to change the perspective. An explanation of the parameters is at the bottom of the page.

Modeling assumptions

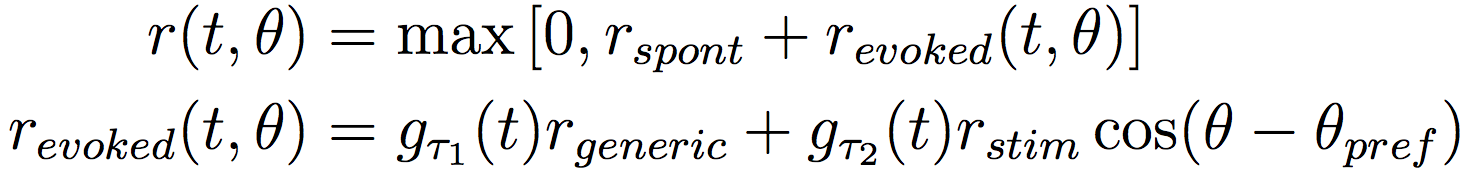

Above, each neuron's time-varying firing rate (or PSTH) in response to a stimulus θ is modeled as:

The idea is that when a stimulus (θ) is shown, a neuron's firing rate gradually increases from spontaneous firing (r_spont) to evoked firing (r_evoked). The evoked firing has two components: a generic, untuned response (r_generic), and a cosine-tuned response (r_stim). Above, g_τ1(t) and g_τ2(t) are gain terms that ramp up from 0 to 1, so that τ_1 and τ_2 control how quickly the firing rate responds to the stimulus.

Each neuron has its own preferred stimulus (θ_pref): 45°, 135°, and 180°, respectively. You can change these preferred stimuli as well as the other PSTH parameters with the controls above.
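The description above can be sketched in a few lines of Python. This is one plausible reading, not necessarily the exact model behind the plot: it assumes exponential ramps for the gain terms, assumes that τ_1 governs the generic response and τ_2 the tuned response, and uses made-up default parameter values. The function names `ramp` and `psth` are hypothetical.

```python
import numpy as np

def ramp(t, tau):
    """Assumed gain term: 0 at stimulus onset (t = 0), approaching 1 with time constant tau."""
    return 1.0 - np.exp(-np.clip(t, 0.0, None) / tau)

def psth(t, theta, theta_pref, r_spont=5.0, r_generic=10.0, r_stim=8.0,
         tau1=0.05, tau2=0.2):
    """Firing rate over time for stimulus theta (degrees); all defaults are illustrative."""
    # Cosine tuning: largest when the stimulus matches the preferred stimulus.
    tuned = r_stim * np.cos(np.deg2rad(theta - theta_pref))
    # Assumption: tau1 ramps the untuned component, tau2 ramps the tuned component.
    return r_spont + ramp(t, tau1) * r_generic + ramp(t, tau2) * tuned

t = np.linspace(0.0, 1.0, 101)             # seconds after stimulus onset
theta_prefs = [45.0, 135.0, 180.0]         # preferred stimuli of the three neurons
stimuli = np.arange(0.0, 360.0, 45.0)      # eight stimulus directions

# trajectories[s, :, n] is the firing rate of neuron n over time for stimulus s.
trajectories = np.stack([
    np.stack([psth(t, theta, pref) for pref in theta_prefs], axis=1)
    for theta in stimuli
])
print(trajectories.shape)  # (8, 101, 3)
```

Under these assumptions every trajectory starts at the same point, (r_spont, r_spont, r_spont), and fans out toward a stimulus-specific endpoint, so plotting each `trajectories[s]` as a 3D line gives one "arm" per stimulus in population space.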

Actual neural data

Coming soon.